You keep hearing about AI agents — but what actually is one, and how hard is it to build? Turns out: not hard at all. In this tutorial, we’ll build a simple AI agent in Python that can call tools, make decisions, and take real actions — in under 100 lines of code.

What Is an AI Agent?

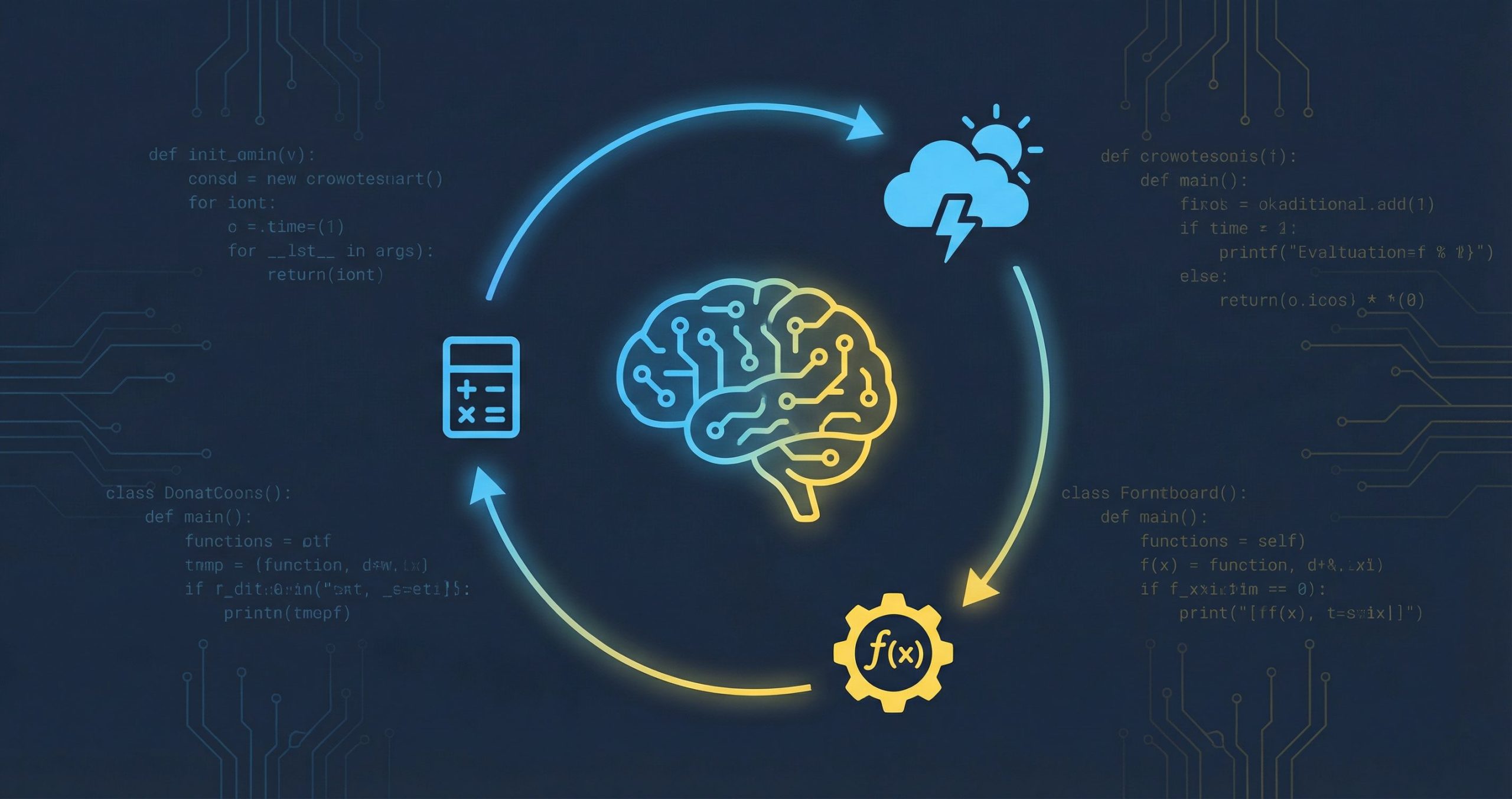

An agent is just an LLM that can do things. A regular chatbot takes your prompt and returns text. An agent takes your prompt, decides if it needs to take an action first (like checking the weather, querying a database, or running a calculation), executes that action, reads the result, and then responds.

The magic ingredient? Tool calls (sometimes called function calling). You give the model a list of functions it’s allowed to use, and it decides when and how to call them.

How Tool Calls Work

The flow is simple:

- You send a message to the LLM, along with a list of available tools (name, description, parameters)

- The LLM reads your message and decides: “Should I call a tool, or just respond directly?”

- If it wants to call a tool, it returns a tool call — a structured JSON object with the function name and arguments

- Your code executes that function and sends the result back to the LLM

- The LLM reads the result and either calls another tool or gives you the final answer

That’s it. The LLM doesn’t actually run your code — it just tells you what to call and with what arguments. Your Python code is the one doing the actual work.
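To make that division of labor concrete, here is a small offline sketch of the "your code executes" half. It assumes a tool call shaped like an OpenAI Chat Completions tool call (an id plus a function with a name and arguments, where arguments arrives as a JSON-encoded string), written out by hand as a plain dict. The names execute_tool_call and add are hypothetical and only for illustration.

```python
import json
from typing import Any, Callable


def add(a: float, b: float) -> str:
    """Toy tool: tools return strings so the result can go straight back to the model."""
    return str(a + b)


# Name -> function lookup, the same idea as tool_functions later in the tutorial
TOOLS: dict[str, Callable[..., str]] = {"add": add}


def execute_tool_call(tool_call: dict[str, Any]) -> dict[str, str]:
    """Run one model-requested tool call and build the message that reports its result."""
    name = tool_call["function"]["name"]
    # The model sends arguments as a JSON string, not as a Python dict
    args = json.loads(tool_call["function"]["arguments"])
    result = TOOLS[name](**args)
    # The tool message echoes the call's id so the model can match result to request
    return {"role": "tool", "tool_call_id": tool_call["id"], "content": result}


# A hand-written example of what the model might request (not a real API response)
example_call = {
    "id": "call_1",
    "type": "function",
    "function": {"name": "add", "arguments": '{"a": 2, "b": 3}'},
}
print(execute_tool_call(example_call))
```

The model never touches add itself: it only produced the name and the argument string, and the returned dict is what gets sent back to it on the next request.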

Let’s Build One

We’ll build a tiny agent that can get the current weather and do math. We’ll use the OpenAI API, but the same pattern works with any LLM that supports tool calling (Claude, Gemini, Mistral, local models via Ollama, etc.).
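The pattern carries over, but the wire format is not identical across providers. For example, Anthropic's Messages API describes each tool with top-level name, description, and input_schema keys instead of nesting them under "function" with a parameters field. A minimal converter, assuming OpenAI-style definitions like the ones used in this tutorial; to_anthropic_tools is a hypothetical helper, and the response side (how tool calls come back) also differs and is not covered here.

```python
from typing import Any


def to_anthropic_tools(openai_tools: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Convert OpenAI-style tool definitions to the shape Anthropic's Messages API expects."""
    converted = []
    for tool in openai_tools:
        fn = tool["function"]
        converted.append({
            "name": fn["name"],
            "description": fn.get("description", ""),
            # Same JSON Schema object, just stored under a different key
            "input_schema": fn.get("parameters", {"type": "object", "properties": {}}),
        })
    return converted


openai_style = [{
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string", "description": "The city name"}},
            "required": ["city"],
        },
    },
}]
print(to_anthropic_tools(openai_style))
```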

Step 1: Install the SDK

```bash
pip install openai
```

Step 2: Define Your Tools

Tools are just regular Python functions. The trick is describing them in a format the LLM can understand:

```python
import json

import openai

client = openai.OpenAI()  # uses the OPENAI_API_KEY environment variable

# --- Tool implementations ---

def get_weather(city: str) -> str:
    """Simulate a weather lookup with hard-coded data."""
    weather_data = {
        "london": "14°C, cloudy",
        "tokyo": "26°C, sunny",
        "new york": "18°C, partly cloudy",
    }
    return weather_data.get(city.lower(), f"No data for {city}")


def calculate(expression: str) -> str:
    """Evaluate a math expression."""
    try:
        # eval() runs whatever text the model sends. Stripping builtins blocks the
        # most obvious abuse, but it is not a sandbox; use a real parser in production.
        return str(eval(expression, {"__builtins__": {}}, {}))
    except Exception as e:
        # Return errors as text so the model can see what went wrong
        return f"Error: {e}"

# --- Tool descriptions (for the LLM) ---

tools = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Get the current weather for a city",
            "parameters": {
                "type": "object",
                "properties": {
                    "city": {
                        "type": "string",
                        "description": "The city name",
                    }
                },
                "required": ["city"],
            },
        },
    },
    {
        "type": "function",
        "function": {
            "name": "calculate",
            "description": "Evaluate a math expression",
            "parameters": {
                "type": "object",
                "properties": {
                    "expression": {
                        "type": "string",
                        "description": "A math expression like '2 + 2' or '15 * 7'",
                    }
                },
                "required": ["expression"],
            },
        },
    },
]
```

Notice the two parts: the actual functions (get_weather, calculate) and the tool descriptions that tell the LLM what each tool does, what parameters it accepts, and when to use it.
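The calculate tool hands model-generated text to eval(), which evaluates arbitrary Python expressions, not just arithmetic. If you want a calculator that can only do arithmetic, one alternative (a swapped-in technique, not the tutorial's approach) is to parse the expression with Python's ast module and evaluate only whitelisted number and operator nodes. This minimal sketch keeps the same calculate(expression) -> str signature, so it could replace the original function; the helper names _BIN_OPS, _UNARY_OPS, and _eval_node are illustrative.

```python
import ast
import operator

# Arithmetic operators the calculator will apply; anything else is rejected.
# Exponentiation is deliberately omitted: an input like 9 ** 9 ** 9 could hang the process.
_BIN_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.Mod: operator.mod,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}


def _eval_node(node: ast.AST) -> float:
    """Recursively evaluate a parsed arithmetic expression tree."""
    if isinstance(node, ast.Expression):
        return _eval_node(node.body)
    # Plain int/float literals only (type() check also rejects True/False)
    if isinstance(node, ast.Constant) and type(node.value) in (int, float):
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
        return _BIN_OPS[type(node.op)](_eval_node(node.left), _eval_node(node.right))
    if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
        return _UNARY_OPS[type(node.op)](_eval_node(node.operand))
    raise ValueError(f"Unsupported expression element: {type(node).__name__}")


def calculate(expression: str) -> str:
    """Evaluate a plain arithmetic expression without calling eval()."""
    try:
        tree = ast.parse(expression, mode="eval")
        return str(_eval_node(tree))
    except Exception as e:  # report any failure back to the model as text
        return f"Error: {e}"


print(calculate("26 - 14"))
print(calculate("__import__('os').getcwd()"))
```

Function calls, attribute access, and names all fall through to the ValueError branch, so the second call comes back as an error string instead of running anything.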

Step 3: The Agent Loop

This is where it all comes together. The agent loop sends messages to the LLM, checks if it wants to call a tool, executes the tool, and loops until the model has a final answer:

```python
# Map tool names to actual functions
tool_functions = {
    "get_weather": get_weather,
    "calculate": calculate,
}


def run_agent(user_message: str):
    print(f"\n{'=' * 50}")
    print(f"User: {user_message}")
    print(f"{'=' * 50}")

    messages = [
        {"role": "system", "content": "You are a helpful assistant. Use tools when needed."},
        {"role": "user", "content": user_message},
    ]

    while True:
        response = client.chat.completions.create(
            model="gpt-4o-mini",
            messages=messages,
            tools=tools,
        )
        message = response.choices[0].message

        # If no tool calls, we have the final answer
        if not message.tool_calls:
            print(f"\nAgent: {message.content}")
            return message.content

        # Keep the assistant's tool-call message in the history; the tool
        # results appended below refer back to it by tool_call_id
        messages.append(message)

        # Process each tool call
        for tool_call in message.tool_calls:
            name = tool_call.function.name
            # Arguments arrive as a JSON-encoded string
            args = json.loads(tool_call.function.arguments)
            print(f"\n→ Calling tool: {name}({json.dumps(args)})")

            result = tool_functions[name](**args)
            print(f"← Result: {result}")

            messages.append({
                "role": "tool",
                "tool_call_id": tool_call.id,
                "content": result,
            })
```

The key insight: it’s a while loop. The agent keeps going until the LLM decides it has enough information to answer without calling another tool. This means agents can chain multiple tool calls — for example, checking the weather in two cities and then calculating the temperature difference.
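The while True loop in run_agent has no upper bound, and tool_functions[name] raises a KeyError if the model asks for a tool that isn't registered. One way to harden both is sketched below, offline, with a scripted stand-in for the LLM so no API key is needed. Assumptions: messages are plain dicts, tool calls use a simplified hypothetical shape (id, name, arguments at the top level rather than the SDK's objects), and max_steps=5 is an arbitrary limit.

```python
import json
from typing import Any, Callable

Message = dict[str, Any]


def run_agent_loop(
    model: Callable[[list[Message]], Message],
    user_message: str,
    tools_by_name: dict[str, Callable[..., str]],
    max_steps: int = 5,
) -> str:
    """Agent loop with a step limit so a confused model cannot loop forever."""
    messages: list[Message] = [{"role": "user", "content": user_message}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append(reply)
        if not reply.get("tool_calls"):
            return reply["content"]  # no tool requested: this is the final answer
        for call in reply["tool_calls"]:
            fn = tools_by_name.get(call["name"])
            if fn is None:
                # Report the problem to the model instead of crashing
                result = f"Error: unknown tool {call['name']}"
            else:
                result = fn(**json.loads(call["arguments"]))
            messages.append({"role": "tool", "tool_call_id": call["id"], "content": result})
    raise RuntimeError(f"No final answer after {max_steps} steps")


# Hypothetical scripted "LLM": first requests two weather lookups, then answers from the results
def scripted_model(messages: list[Message]) -> Message:
    tool_results = [m["content"] for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"role": "assistant", "content": None, "tool_calls": [
            {"id": "1", "name": "get_weather", "arguments": '{"city": "London"}'},
            {"id": "2", "name": "get_weather", "arguments": '{"city": "Tokyo"}'},
        ]}
    return {"role": "assistant", "content": " | ".join(tool_results)}


weather = {"london": "14°C, cloudy", "tokyo": "26°C, sunny"}
tools_by_name = {"get_weather": lambda city: weather.get(city.lower(), f"No data for {city}")}
print(run_agent_loop(scripted_model, "Weather in London and Tokyo?", tools_by_name))
```

A scripted model like this is also a cheap way to unit-test the loop itself before spending tokens on a real one.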

Step 4: Run It

```python
# Simple question (no tool needed)
run_agent("What is Python?")

# Needs a tool call
run_agent("What's the weather in Tokyo?")

# Chains multiple tools
run_agent("What's the weather in London and Tokyo? What's the temperature difference?")
```

Demo Output

Here’s what actually happens when you run the agent:

```text
==================================================
User: What is Python?
==================================================

Agent: Python is a high-level, general-purpose programming language known
for its readability, simplicity, and versatility.

==================================================
User: What's the weather in Tokyo?
==================================================

→ Calling tool: get_weather({"city": "Tokyo"})
← Result: 26°C, sunny

Agent: The weather in Tokyo is currently 26°C and sunny!

==================================================
User: What's the weather in London and Tokyo? What's the temperature difference?
==================================================

→ Calling tool: get_weather({"city": "London"})
← Result: 14°C, cloudy

→ Calling tool: get_weather({"city": "Tokyo"})
← Result: 26°C, sunny

→ Calling tool: calculate({"expression": "26 - 14"})
← Result: 12

Agent: Here's the weather:
- London: 14°C, cloudy
- Tokyo: 26°C, sunny

The temperature difference is 12°C — Tokyo is warmer!
```

Look at that last example: the LLM made three tool calls on its own, two weather lookups and a calculation. It decided what to call and in what order, then synthesized everything into a clear answer. That's the power of an agent.

Key Takeaways

- An AI agent is just an LLM + a loop + tool calls

- You define tools as normal Python functions and describe them in JSON

- The LLM decides when and how to call your tools — you just execute and return the result

- The agent loop keeps running until the model has a final answer

- This same pattern scales to complex agents with dozens of tools (database access, API calls, file operations, web browsing — anything you can write a function for)

Want to go deeper? Try adding more tools — a web scraper, a database query function, or a file reader. The pattern stays the same: define the function, describe it for the LLM, and add it to the loop. If you’re curious about how LLMs themselves work under the hood, check out our beginner-friendly guide to building a GPT from scratch in PyTorch.
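As the number of tools grows, keeping each function and its JSON description in sync by hand gets tedious. One option, sketched here under simple assumptions (parameters annotated with str, int, float, or bool; no per-parameter descriptions), is a decorator that derives the description from the function's signature. The tool decorator, TOOL_SCHEMAS, TOOL_FUNCTIONS, and the word_count example are hypothetical names, not part of the OpenAI SDK.

```python
import inspect
from typing import Any, Callable

# Hypothetical registries playing the roles of `tools` and `tool_functions` in the tutorial
TOOL_SCHEMAS: list[dict[str, Any]] = []
TOOL_FUNCTIONS: dict[str, Callable[..., str]] = {}

# Map simple Python annotations to JSON Schema type names (unknown types fall back to string)
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def tool(description: str) -> Callable[[Callable[..., str]], Callable[..., str]]:
    """Register a function as a tool and build its parameter schema from its signature."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        properties: dict[str, Any] = {}
        required: list[str] = []
        for name, param in inspect.signature(fn).parameters.items():
            properties[name] = {"type": _JSON_TYPES.get(param.annotation, "string")}
            if param.default is inspect.Parameter.empty:
                required.append(name)  # no default value means the model must supply it
        TOOL_SCHEMAS.append({
            "type": "function",
            "function": {
                "name": fn.__name__,
                "description": description,
                "parameters": {"type": "object", "properties": properties, "required": required},
            },
        })
        TOOL_FUNCTIONS[fn.__name__] = fn
        return fn
    return register


@tool("Count the words in a piece of text")
def word_count(text: str, min_length: int = 1) -> str:
    return str(sum(1 for w in text.split() if len(w) >= min_length))


print(TOOL_SCHEMAS[0]["function"]["parameters"])
```

Passing TOOL_SCHEMAS as tools= and using TOOL_FUNCTIONS in place of tool_functions would plug this into the same loop; adding a tool then becomes a single decorated function.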

And if you’re building agents and want to track their runs, tool calls, and errors in production, take a look at AgentLog — it’s observability built specifically for AI agents.
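The sketch below is not AgentLog's API. It is a generic, standard-library-only way to capture the run data mentioned above (tool name, arguments, duration, errors) by wrapping each tool before it goes into tool_functions. logged_tool and CALL_LOG are hypothetical names, and the wrapper accepts keyword arguments only, matching how run_agent calls tools with **args.

```python
import functools
import logging
import time
from typing import Any, Callable

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("agent.tools")

# Hypothetical in-memory record of every tool call, handy for tests or later export
CALL_LOG: list[dict[str, Any]] = []


def logged_tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Wrap a tool so each call records its name, arguments, duration, and any error."""
    @functools.wraps(fn)
    def wrapper(**kwargs: Any) -> str:
        start = time.perf_counter()
        entry: dict[str, Any] = {"tool": fn.__name__, "args": kwargs, "error": None}
        try:
            result = fn(**kwargs)
            entry["result"] = result
            return result
        except Exception as e:
            entry["error"] = repr(e)
            raise  # let the agent loop decide how to handle the failure
        finally:
            # Runs on success and on failure, so every call is recorded
            entry["seconds"] = time.perf_counter() - start
            CALL_LOG.append(entry)
            log.info("tool=%s args=%s error=%s %.3fs",
                     entry["tool"], kwargs, entry["error"], entry["seconds"])
    return wrapper


@logged_tool
def get_weather(city: str) -> str:
    data = {"london": "14°C, cloudy", "tokyo": "26°C, sunny"}
    return data.get(city.lower(), f"No data for {city}")


get_weather(city="Tokyo")
```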

The full code from this tutorial comes to roughly 100 lines. Copy it, swap in your own tools, and you've got an agent. Happy building.
